Table of contents

- What risk classes are there in the AI Act?

- Who is responsible for the risk classification of AI?

- Risk classification as a structured process

- How do I classify AI applications in a structured way?

- How do I classify AI models in a structured way?

- How should I document the AI risk classification?

- Why is AI risk classification not a one-time task?

What risk classes are there in the AI Act?

Five risk classes can be derived from the AI Act.

Prohibited practices (Art. 5 AI Act)

The AI Act first defines a number of practices in the field of artificial intelligence that are completely prohibited due to their unacceptable risk to the fundamental rights and values of the EU.

These include AI systems that use one or more of the following practices:

- Subliminal influence

- Exploitation of vulnerabilities

- Social scoring

- Prediction of criminal offenses

- Creation of facial recognition databases

- Emotion recognition in the workplace and in educational institutions

- Biometric categorization based on sensitive characteristics

- Real-time remote biometric identification in public spaces for law enforcement purposes

High-risk AI (Art. 6 ff. AI Act)

High-risk AI systems pose a significant risk to the health, safety, and fundamental rights of EU citizens. Accordingly, the compliance requirements for this risk class are particularly strict and comprehensive.

There are basically two approaches to classification as high-risk AI:

Embedded AI

An AI system is considered high-risk AI if it is subject to a conformity assessment, either independently or as a safety component of another product, due to existing EU harmonization regulations. The relevant harmonization regulations are listed in Annex I of the AI Act.

Put simply, this applies to certain AI applications that are integrated into physical products, such as machines or motor vehicles.

The complete list from Annex I can be found on the EU's online portal.

Stand-alone AI used in particularly sensitive areas

In addition, Annex III of the AI Act lists areas of application that also lead to classification as high-risk AI. These include the following areas, among others:

- Biometric identification

- Critical infrastructure (e.g., road traffic, water, gas, electricity)

- Access to education and government services

- Assessment of examinations or work performance

- Hiring and firing of employees

- Access to important private and public services (e.g., credit rating, risk assessment, and pricing of life and health insurance)

- Law enforcement and migration

- Administration of justice and democratic processes (e.g., election interference)

Annex III of the AI Act can also be found on the EU's online portal.

Medium risk (Art. 50 AI Act)

Medium-risk AI systems include applications that interact directly with humans. The AI Act sets out specific transparency obligations for providers and deployers of such "certain AI systems." In practice, this mainly concerns chatbots and AI applications that are capable of creating media content.

Medium-risk AI systems meet one of the following criteria:

- Direct interaction with humans

- Generation of synthetic content (audio, image, video, or text content)

- Emotion recognition or biometric categorization

- Creation of deepfakes

AI models with systemic risk (Art. 55 AI Act)

AI models for general use constitute a separate category in the AI Act. Unlike the other risk categories, the focus here is not on specific use cases, but on the underlying models themselves.

AI models for general use that have high-impact capabilities are classified as AI models with systemic risk. The technical criteria for this classification are yet to be specified in detail by the EU Commission. However, systemic risk is already presumed if the cumulative computing power used to train the model exceeds 10²⁵ floating point operations (FLOPs).

The website of the Epoch AI research institute provides an overview of published AI models and their computing power.

Low risk

Even if an AI system is classified as low risk, providers and deployers still have a responsibility: the AI Act requires that employees and contractors have sufficient AI literacy (Art. 4 AI Act). In addition, pursuant to Article 95 of the AI Act, the EU supports the development of codes of conduct and governance mechanisms by the industry to ensure the responsible use of AI.

Important: The risk classes in the AI Act are cumulative, which means that an AI application can be assigned to several risk categories at the same time.
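The cumulative nature of the risk classes can be illustrated with a small data structure. The following is a minimal sketch, not an official taxonomy: the names `RiskClass`, `labels`, and the example application are assumptions made for illustration.

```python
from __future__ import annotations

from enum import Flag, auto


class RiskClass(Flag):
    """Risk classes as described in this article (illustrative names)."""
    PROHIBITED = auto()
    HIGH_RISK = auto()
    MEDIUM_RISK = auto()     # transparency obligations, Art. 50
    SYSTEMIC_RISK = auto()   # AI models for general use, Art. 55
    LOW_RISK = auto()


def labels(classes: RiskClass) -> list[str]:
    """Return the names of all risk classes contained in a combined value."""
    return [rc.name for rc in RiskClass if rc in classes]


# Hypothetical example: an applicant-screening chatbot can be high-risk
# (Annex III) and medium risk (Art. 50) at the same time.
recruiting_chatbot = RiskClass.HIGH_RISK | RiskClass.MEDIUM_RISK
print(labels(recruiting_chatbot))
```

A flag type is used here instead of a plain enumeration because one application may carry several classes at once.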

Who is responsible for the risk classification of AI?

The AI Act does not specify who within an organization is responsible for AI risk classification, so companies must define responsibilities independently and develop appropriate processes for evaluating and classifying their AI systems.

In practice, responsibilities are typically defined in an internal AI policy.

Since risk classification determines the compliance requirements of an AI system or AI model and incorrect classification can result in fines, this process should be led by knowledgeable experts.

It makes sense to involve various departments, including

- AI officer

- Legal department / General Counsel

- Data protection officer, if applicable

- IT department (e.g., IT asset manager)

- Specialist departments that use the respective AI, because the intended purpose is decisive for the classification

Risk classification as a structured process

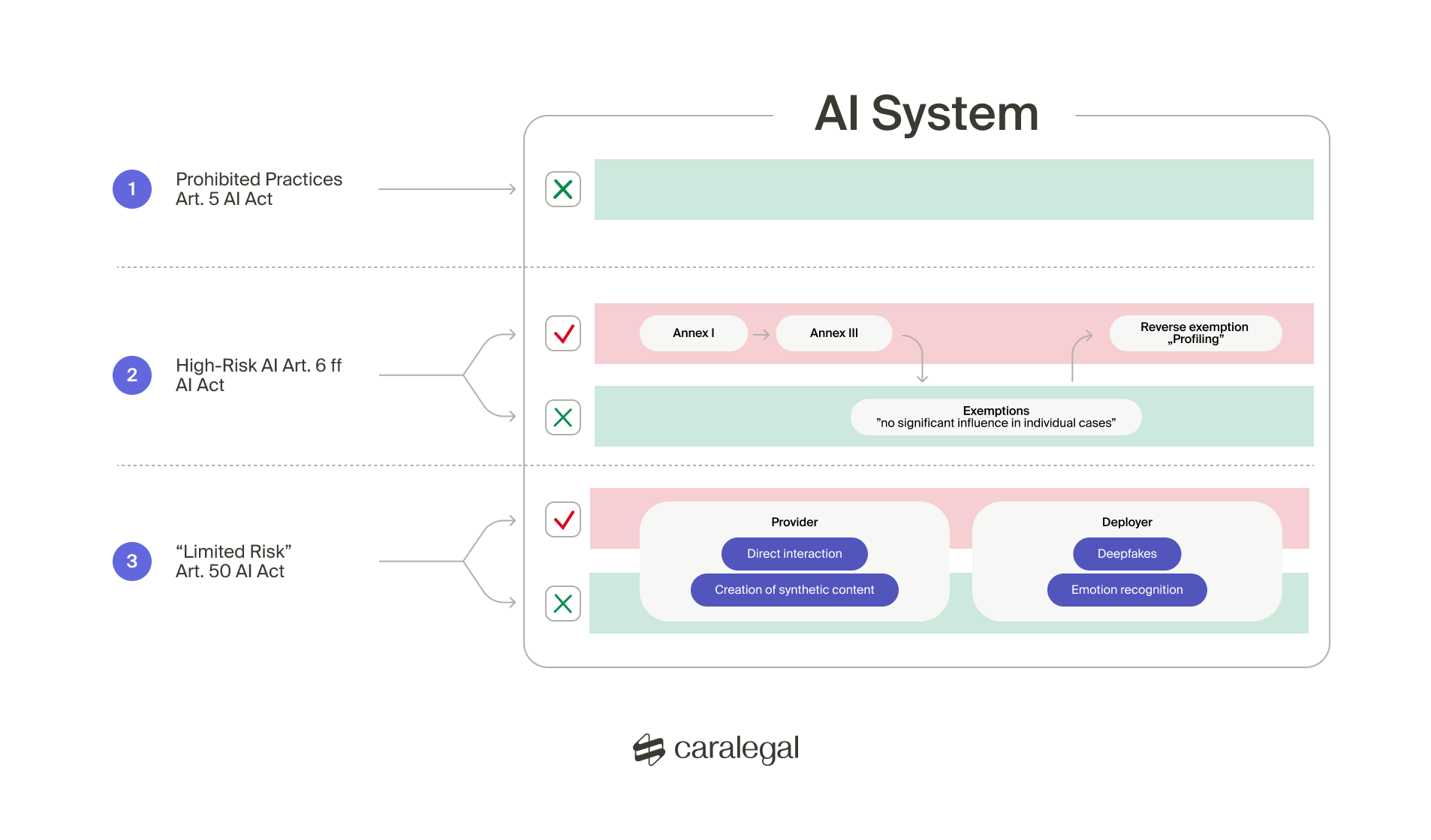

As already mentioned, the risk classes can apply cumulatively. In practice, this means that every AI application or AI model should be checked for all possible risk categories. To make the review process more efficient, it makes sense to distinguish between the AI application and the underlying AI model.

How do I classify AI applications in a structured way?

For an AI application (also known as an AI system), the following risk classes must be considered:

- Prohibited AI practices

- High-risk AI

- Medium risk

- Low risk

The first step is to check whether the AI application uses any of the prohibited practices listed in Article 5 of the AI Act.

The second step is to assess whether the AI application falls within the scope of high-risk AI. The following questions must be clarified:

- Is the AI application a product or a safety-related component of a product that must undergo a conformity assessment in accordance with EU harmonization regulations (Annex I of the AI Act)?

- Does the application fall within one of the fields of use defined in Annex III of the AI Act?

Important: Specific exemptions and reverse exemptions apply to the fields of application listed in Annex III. If the AI application does not have a significant influence on the outcome of the decision-making process in individual cases, an exemption may apply. However, if profiling is carried out, a reverse exemption applies and the application must be classified as high-risk in all cases.
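The second review step, including the exemption and the reverse exemption, can be sketched as a simple decision function. This is a simplified illustration under stated assumptions: the class `HighRiskInput`, its field names, and the function `is_high_risk` are hypothetical, and the actual legal assessment involves more criteria than four yes/no answers.

```python
from dataclasses import dataclass


@dataclass
class HighRiskInput:
    """Answers collected in the second review step (hypothetical field names)."""
    annex_i_conformity_assessment: bool   # product or safety component under Annex I
    annex_iii_use_case: bool              # field of use listed in Annex III
    significant_influence_on_decision: bool = True
    performs_profiling: bool = False


def is_high_risk(answers: HighRiskInput) -> bool:
    # Route 1: embedded AI that must undergo a conformity assessment (Annex I).
    if answers.annex_i_conformity_assessment:
        return True
    # Route 2: stand-alone AI in a sensitive area (Annex III).
    if not answers.annex_iii_use_case:
        return False
    # Reverse exemption: profiling leads to high-risk classification in all cases.
    if answers.performs_profiling:
        return True
    # Exemption: no significant influence on the outcome of the decision.
    return answers.significant_influence_on_decision


# Hypothetical Annex III tool that only prepares a decision but profiles people.
print(is_high_risk(HighRiskInput(False, True, False, True)))
```

The profiling check is evaluated before the exemption so that the reverse exemption always takes precedence.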

The third step is to check whether the AI application falls under the provisions of Article 50 of the AI Act and is therefore classified as medium risk. The decisive factor here is interaction with natural persons. Depending on the type of AI system, transparency obligations arise for either providers or deployers.

Transparency obligations for deployers apply to AI systems that create deepfakes or perform emotion recognition and biometric categorization.

Transparency obligations for providers apply to AI systems that interact directly with natural persons or generate synthetic content.
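The split of transparency obligations between providers and deployers can be expressed as a lookup table. The sketch below only mirrors the two preceding paragraphs; the feature labels and the function name `transparency_duties` are illustrative assumptions, not terms defined in the AI Act.

```python
from __future__ import annotations

# Who carries the transparency obligation for which system feature,
# as described in the text above. Key strings are illustrative labels.
TRANSPARENCY_DUTY_HOLDER = {
    "direct_interaction": "provider",
    "synthetic_content": "provider",
    "deepfake": "deployer",
    "emotion_recognition": "deployer",
    "biometric_categorization": "deployer",
}


def transparency_duties(features: set[str]) -> dict[str, list[str]]:
    """Group the Art. 50-relevant features of a system by obligated role."""
    duties: dict[str, list[str]] = {"provider": [], "deployer": []}
    for feature in sorted(features):
        role = TRANSPARENCY_DUTY_HOLDER.get(feature)
        if role is not None:          # features outside Art. 50 are ignored
            duties[role].append(feature)
    return duties


# Hypothetical chatbot that can also generate deepfake videos.
print(transparency_duties({"direct_interaction", "deepfake"}))
```

If either list in the result is non-empty, the application would count as medium risk in the sense used in this article.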

The fourth step is voluntary: it assesses whether the application is low risk. This assessment is based on guidelines that companies can follow on a voluntary basis. Specific guidelines on this have not yet been published.

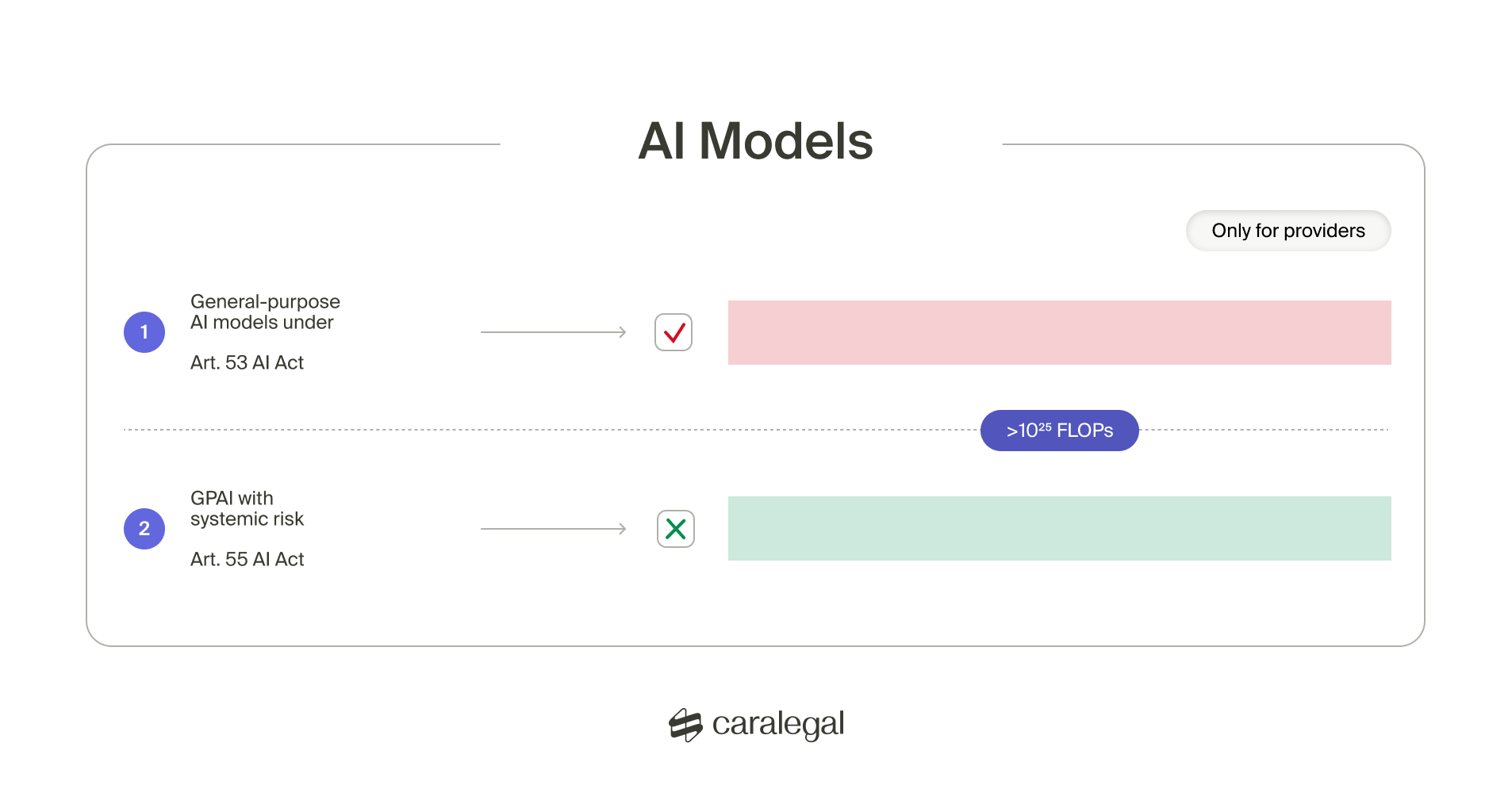

How do I classify AI models in a structured way?

For an AI model for general use, two risk classes must be considered:

- Systemic risk

- Low risk

The first step is to clarify whether there is a systemic risk. The risk classification of such AI models depends on whether the model has high-impact capabilities. The key indicator for this assessment to date is the cumulative computing power used to train the model, measured in floating point operations (FLOPs).

If the computing power exceeds 10²⁵ FLOPs, the model is classified as an AI model with systemic risk.

Additional criteria are set out in Annex XIII of the AI Act. Another indication of high-impact capabilities is if the model is used by more than 10,000 commercial users in the EU.
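The two indicators named above can be checked with a few lines of code. This is a minimal sketch that uses the thresholds exactly as stated in this article; the function name `systemic_risk_indicators` and its parameters are assumptions, and the further criteria of Annex XIII are not modeled.

```python
from __future__ import annotations

TRAINING_COMPUTE_THRESHOLD_FLOPS = 1e25   # 10^25 FLOPs, as stated above
COMMERCIAL_USER_THRESHOLD = 10_000        # commercial users in the EU, as stated above


def systemic_risk_indicators(training_flops: float, eu_commercial_users: int) -> list[str]:
    """Return the indicators from the text that a model meets (empty list if none)."""
    indicators = []
    if training_flops > TRAINING_COMPUTE_THRESHOLD_FLOPS:
        indicators.append("training compute above 10^25 FLOPs")
    if eu_commercial_users > COMMERCIAL_USER_THRESHOLD:
        indicators.append("more than 10,000 commercial users in the EU")
    return indicators


# Hypothetical model trained with 2 x 10^25 FLOPs and 500 commercial users.
print(systemic_risk_indicators(2e25, 500))
```

An empty result does not rule out systemic risk, since the remaining Annex XIII criteria still have to be assessed separately.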

Important: The obligations for AI models for general use are directed exclusively at the providers of these models.

How should I document the AI risk classification?

Given the legal implications of risk classification for AI systems, the review process should be carefully and comprehensively documented, especially when it comes to determining whether or not an AI system is high-risk.

It is advisable to maintain an AI register that includes the following information, among other things:

- Intended purpose of the AI application

- Description of how the AI application works

- Internal responsibilities for development, operation, and monitoring

- AI asset description

In addition, you should document in a comprehensible manner how each risk classification was reached. This evidence should also be available for external review, for example by supervisory authorities, if necessary.
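An AI register entry with the fields listed above, plus the classification rationale, could look like the following. This is only a sketch: the class `RegisterEntry`, all field names, and the example values are hypothetical, and a real register may need further fields.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class RegisterEntry:
    """One AI register record; all field names are illustrative assumptions."""
    name: str
    intended_purpose: str
    functional_description: str
    responsibilities: dict[str, str]   # role -> responsible unit
    asset_description: str
    risk_classes: list[str] = field(default_factory=list)
    classification_rationale: str = ""
    classified_on: str = ""            # ISO date of the assessment


# Hypothetical example entry.
entry = RegisterEntry(
    name="Applicant screening assistant",
    intended_purpose="Pre-sorting of incoming job applications",
    functional_description="Ranks applications against a job profile",
    responsibilities={
        "development": "IT department",
        "operation": "HR department",
        "monitoring": "AI officer",
    },
    asset_description="Third-party SaaS application",
    risk_classes=["high-risk", "medium risk"],
    classification_rationale="Annex III field of use (hiring); interacts with applicants",
    classified_on="2025-01-15",
)

# Serialize the entry so it can be stored or handed over for external review.
register_json = json.dumps(asdict(entry), indent=2)
print(register_json)
```

Keeping the rationale and the assessment date next to the result makes it easier to reconstruct later why a classification was made.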

A legal opinion that takes all potential risk categories into account can be a valuable aid in making the classification process legally compliant.

Why is AI risk classification not a one-time task?

The risk classification of your AI application is not a one-time task – it can change over time due to internal and external factors. Therefore, classification should be understood as a continuous process and reviewed regularly.

- Changes in intended use

AI systems, similar to traditional software, are dynamic and constantly evolving. New areas of application or functional enhancements can change the original purpose. This may require reclassification, as the risk rating is based on the specific use.

- New legal developments

The legal framework is also changing. The EU Commission and designated bodies are expected to publish new guidelines on the classification of AI systems in the coming months and years, particularly for high-risk AI. In addition, the areas defined in Annexes I and III may be subject to ongoing adjustments, which may have an impact on risk classification.

The same applies to the other risk classes: the AI Act deliberately leaves the EU room to adapt to future technological developments so that it can respond flexibly to innovations.
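The triggers described above can be turned into a simple check for when a classification needs to be reviewed. The sketch below is an assumption-based illustration: the function `review_due`, its parameters, and in particular the default review interval of 365 days are not prescribed by the AI Act.

```python
from datetime import date, timedelta


def review_due(
    last_review: date,
    today: date,
    purpose_changed: bool,
    legal_update_since_review: bool,
    max_age_days: int = 365,   # assumed internal review interval
) -> bool:
    """Return True if the risk classification should be reviewed again."""
    # Event-based triggers: changed intended use or new legal developments.
    if purpose_changed or legal_update_since_review:
        return True
    # Time-based trigger: the last review is older than the chosen interval.
    return today - last_review > timedelta(days=max_age_days)


# Hypothetical case: no changes, last review five months ago.
print(review_due(date(2025, 1, 1), date(2025, 6, 1), False, False))
```

Combining event-based and time-based triggers means a review still takes place even if nobody reports a change.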

Conclusion:

To ensure legal certainty and minimize compliance risks, it is advisable to establish risk classification as a recurring process. This will enable companies to respond promptly to changes in the intended purpose or regulatory environment.