Table of contents

- What is ISO/IEC 42001:2023 about?

- Who is ISO/IEC 42001:2023 intended for?

- Why is ISO/IEC 42001:2023 relevant today?

- What does the management system according to ISO/IEC 42001:2023 cover?

- What does ISO/IEC 42001:2023 require and how does it overlap with the AI Act?

- What are the arguments in favor of implementing ISO/IEC 42001:2023?

- Conclusion

What is ISO/IEC 42001:2023 about?

The increasing integration of artificial intelligence (AI) in companies brings not only opportunities, but also new challenges in terms of transparency, security, ethics, and governance.

ISO/IEC 42001:2023 offers the first internationally uniform framework for systematically addressing these challenges. The standard specifies requirements for establishing, implementing, maintaining, and continually improving an AI management system in organizations, regardless of industry or company size.

In particular, the following objectives are pursued:

- Promoting the responsible use of AI

- Ensuring transparency, traceability, and explainability of AI systems

- Managing risks throughout the entire AI life cycle

- Supporting compliance with legal (e.g., AI Act) and ethical requirements

Who is ISO/IEC 42001:2023 intended for?

The standard is aimed at all organizations that develop, provide, or use AI systems, regardless of industry or size. It is particularly relevant for companies that are subject to regulatory requirements, such as the AI Act, and are therefore obliged to implement systematic AI risk management.

The standard creates a structured framework for implementing these requirements, especially for companies that develop, offer, or use high-risk AI systems as defined by the AI Act. Organizations in highly regulated or safety-critical industries – such as healthcare, finance, or public administration – also benefit from ISO/IEC 42001:2023, as it enables a traceable and verifiable approach to compliance with legal requirements.

Why is ISO/IEC 42001:2023 relevant today?

With the AI Act coming into force, ISO/IEC 42001:2023 is becoming more prominent: it supports companies in implementing key requirements of the regulation – such as risk management, transparency, monitoring, and documentation – in a structured manner. The standard not only provides specific requirements, but also helpful guidance on combining technical and legal requirements.

The standard is also relevant regardless of regulatory pressure: organizations that apply it document their responsible use of AI, build trust among customers, partners, and regulatory authorities, and can thus secure a lasting competitive advantage.

Information Deep Dive:

Would you like to know how the technical requirements for high-risk AI can be implemented in practice?

Our white paper on the technical implementation of the AI Act (currently available only in German) shows how international standards and appropriate measures are applied in practice, including a clear use case and system overview.

What does the management system according to ISO/IEC 42001:2023 cover?

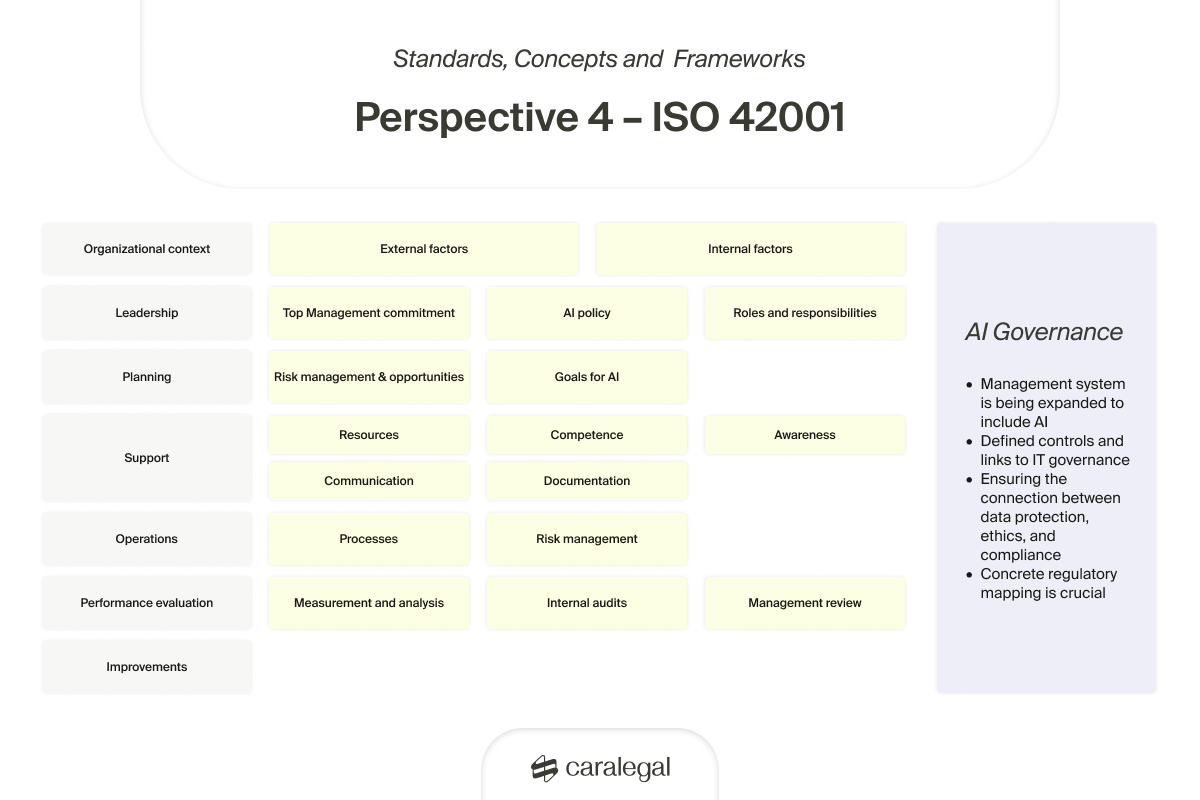

ISO/IEC 42001:2023 is divided into seven main sections, which together represent the life cycle of a functioning AI management system.

These include:

- Context of the organization

- Leadership

- Planning

- Support

- Operation

- Performance evaluation

- Improvement

These core areas are supplemented by four annexes (Annexes A to D), which contain practical guidance for implementing the requirements and are therefore particularly relevant for the operational application of the standard.

Overview of the contents of ISO/IEC 42001

What does ISO/IEC 42001:2023 require and how does it overlap with the AI Act?

The standard sets out a number of specific requirements for the implementation of an AI management system. In this section, we provide an overview of the key content and show you where it intersects with the requirements of the AI Act.

The sections "Context of the organization" and "Improvement" are not included in this overview, as the focus is on the core of the standard: the establishment, control, and implementation of an effective AI management system.

Leadership

A key requirement in the area of leadership is the clear anchoring of the AI management system at the highest management level. Company management is responsible for ensuring that the system is integrated into existing business processes and equipped with the necessary resources, such as time, personnel, and budget. It is also involved in the implementation and continuous improvement of the system.

In addition, senior management should formulate a binding AI policy and clearly define and communicate responsibilities in AI management. These two measures form the foundation for clear goals, roles, and processes and are essential for a resilient and sustainable management system.

Even though the AI Act does not contain any explicit requirements for leadership, the connection is clear: establishing a robust framework at the management level facilitates the implementation of key regulatory requirements, such as the establishment of a quality management system in accordance with Art. 17 of the AI Act.

Fulfilling this requirement contributes directly to overall AI compliance. Anchoring the system at the management level also helps to minimize liability risks.

Planning and operation

Key requirements in this area relate to AI risk assessment and treatment. Organizations must define how risks associated with their AI systems are systematically identified, assessed, and subsequently minimized through appropriate measures.

In addition, the standard requires an AI system impact assessment. This involves analyzing and documenting the potential impact of the AI systems used on individuals and society as a whole, based on a structured, traceable process.

There are clear overlaps in content with the AI Act:

- For high-risk AI systems, the AI Act prescribes a mandatory risk management system (Art. 9 AI Act).

- In addition, it requires certain deployers of high-risk AI systems to carry out a fundamental rights impact assessment (Art. 27 AI Act).

Both requirements can be directly addressed by implementing ISO/IEC 42001:2023, making the standard an effective tool for fulfilling legal obligations.
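The risk assessment and treatment steps described in this section can be illustrated with a small risk register. The following is only a minimal sketch: the `AIRisk` class, the 1 to 5 scales, the score thresholds, and the example systems are hypothetical assumptions chosen for illustration. Neither ISO/IEC 42001:2023 nor the AI Act prescribes a particular scoring scheme.

```python
from dataclasses import dataclass, field


@dataclass
class AIRisk:
    """One entry in a hypothetical AI risk register."""
    system: str
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)
    treatments: list[str] = field(default_factory=list)

    @property
    def score(self) -> int:
        # Simple likelihood x impact product; an assumption, not a rule of the standard.
        return self.likelihood * self.impact

    @property
    def level(self) -> str:
        # Thresholds are illustrative only.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


LEVEL_ORDER = {"low": 0, "medium": 1, "high": 2}


def untreated(risks, min_level="medium"):
    """Return risks at or above min_level that have no treatment measure yet."""
    return [
        r for r in risks
        if LEVEL_ORDER[r.level] >= LEVEL_ORDER[min_level] and not r.treatments
    ]


# Hypothetical example entries.
register = [
    AIRisk("credit-scoring-model", "Biased outcomes for protected groups", 3, 5),
    AIRisk("support-chatbot", "Incorrect answers to customers", 4, 2,
           ["human escalation path"]),
    AIRisk("cv-screening-tool", "Opaque ranking decisions", 2, 3),
]

for risk in untreated(register):
    print(f"{risk.system}: {risk.level} ({risk.score}) needs a treatment measure")
```

Keeping identification, assessment, and treatment in one record makes it easy to see which risks are still open, and the same record can serve as part of the documentation that the standard asks for.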

Support

The most important requirements in this area are to provide sufficient resources and competencies for AI management and to create documentation on the AI management system. Organizations must ensure that the people involved in AI have the necessary professional qualifications.

In addition, the standard requires documentation of certain elements of the management system. These include relevant processes, identified AI risks, and the resulting risk treatment measures.

Here, too, there are parallels between ISO/IEC 42001:2023 and the AI Act:

- According to Art. 4 AI Act, providers and deployers of AI systems must take measures to ensure that their personnel have a sufficient level of AI literacy.

- In addition, Art. 9 (1) AI Act requires the documentation of the risk management system, a requirement that is consistent with the provisions of ISO/IEC 42001:2023 and can be effectively fulfilled by implementing the standard.
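The overlaps named in the Leadership, Planning and operation, and Support sections can be kept as a simple lookup table, for example when planning audits. This is a sketch under stated assumptions: the `CROSSWALK` dictionary and the `areas_for_article` helper are hypothetical names, and the content only restates the overlaps described in this article. It is not an official mapping between the standard and the AI Act.

```python
# Overlaps as described in this article (illustrative, not an official mapping).
# Keys: areas of ISO/IEC 42001:2023; values: AI Act article number -> topic.
CROSSWALK = {
    "Leadership": {
        17: "quality management system",
    },
    "Planning and operation": {
        9: "risk management system",
        27: "fundamental rights impact assessment",
    },
    "Support": {
        4: "AI competence (AI literacy) of personnel",
        9: "documentation of the risk management system (Art. 9 (1))",
    },
}


def areas_for_article(article: int) -> list[str]:
    """Return the areas of the standard that touch the given AI Act article."""
    return [area for area, articles in CROSSWALK.items() if article in articles]


print(areas_for_article(9))   # ['Planning and operation', 'Support']
print(areas_for_article(17))  # ['Leadership']
```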

What do the annexes contain?

ISO/IEC 42001:2023 contains a total of four annexes (Annex A to D) that provide key guidance for the practical implementation of the standard:

- Annex A lists reference control objectives and controls, i.e., specific measures for addressing AI risks.

- Annex B provides implementation guidance on the risk-minimizing measures from Annex A.

- Annex C contains examples of potential AI-related organizational objectives and risk sources to support risk analysis.

- Annex D describes how an AI management system can be used across sectors and integrated into other management systems (and associated ISO standards).

It is particularly important to distinguish how binding the individual annexes are:

- Annexes A and B are normative, i.e., they are binding for certification and thus part of official audits.

- Annexes C and D, on the other hand, are informative, i.e., their application is voluntary and they serve as guidance.

What are the arguments in favor of implementing ISO/IEC 42001:2023?

Organizations should implement ISO/IEC 42001:2023 if they want to introduce, operate, and continuously develop a structured AI management system, whether for regulatory or strategic reasons.

The standard is particularly relevant for companies seeking ISO certification. With a certified AI management system, organizations can demonstrate to the outside world that they use AI responsibly, compliantly, and transparently. This sends a strong signal to customers, partners, and investors and can bring a clear competitive advantage.

Another practical advantage lies in the structure of the standard: thanks to its alignment with the High Level Structure (HLS), ISO/IEC 42001:2023 can be easily integrated into existing management systems or combined with standards such as ISO 9001 (quality management), ISO/IEC 27001 (information security), or ISO 37301 (compliance management).

In addition, the standard is industry-independent and applicable to organizations of all sizes, from start-ups with AI products to established companies in highly regulated environments.

Conclusion

As can be seen, ISO/IEC 42001:2023 can be a central building block for effective AI management. Those who apply the standard anchor AI compliance not just at isolated points, but across the entire life cycle of their AI applications. This makes the responsible use of AI measurable, traceable, and verifiable to third parties.

This standard therefore not only provides regulatory certainty, but also strengthens trust in AI applications, both internally and externally. Organizations that focus on structured AI governance at an early stage can secure decisive advantages in an increasingly regulated market.